2026-04-17

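Review and Preview

Yesterday's topics

- Basic Ubuntu system administration

- Setting up the container lab environment

- Container image management

- Container management

Pre-class review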

# Prepare the docker-ce repository (provides the containerd.io package)

curl -fsSL https://mirrors.huaweicloud.com/docker-ce/linux/ubuntu/gpg | gpg --dearmour -o /etc/apt/trusted.gpg.d/containerd.gpg

cat << 'EOF' > /etc/apt/sources.list.d/docker-ce.list

deb [arch=amd64] https://mirrors.huaweicloud.com/docker-ce/linux/ubuntu noble stable

EOF

# Prepare the Kubernetes repository (provides the cri-tools package, i.e. crictl)

curl -fsSL https://mirrors.aliyun.com/kubernetes-new/core/stable/v1.30/deb/Release.key | gpg --dearmor -o /etc/apt/keyrings/kubernetes-apt-keyring.gpg

echo "deb [signed-by=/etc/apt/keyrings/kubernetes-apt-keyring.gpg] https://mirrors.aliyun.com/kubernetes-new/core/stable/v1.30/deb/ /" > /etc/apt/sources.list.d/kubernetes.list

apt update

# Deploy containerd (the Ubuntu-packaged version)

apt install -y containerd=1.7.12-0ubuntu4

ls /etc/containerd

mkdir /etc/containerd

containerd config default

containerd config default > /etc/containerd/config.toml

# Configure registry mirrors the legacy way (registry.mirrors in config.toml)

vim /etc/containerd/config.toml

...

[plugins."io.containerd.grpc.v1.cri".registry]

  # config_path must stay empty (""): containerd rejects registry.mirrors when config_path is set

  config_path = ""

...

# Find the mirrors line

  [plugins."io.containerd.grpc.v1.cri".registry.mirrors]

# Add the following four lines below it (TOML ignores indentation; keep it consistent for readability)

    [plugins."io.containerd.grpc.v1.cri".registry.mirrors."docker.io"]

      endpoint = ["https://docker.m.daocloud.io","https://09def58152000fc00ff0c00057bad7e0.mirror.swr.myhuaweicloud.com"]

    [plugins."io.containerd.grpc.v1.cri".registry.mirrors."registry.k8s.io"]

      endpoint = ["https://k8s.m.daocloud.io","https://09def58152000fc00ff0c00057bad7e0.mirror.swr.myhuaweicloud.com"]

systemctl restart containerd.service

# Deploy crictl

apt install -y cri-tools

crictl config default

vim /etc/crictl.yaml

crictl completion bash

crictl completion bash > /etc/bash_completion.d/crictl

source /etc/bash_completion.d/crictl

crictl config --help

crictl config --set runtime-endpoint=unix:///var/run/containerd/containerd.sock

cat /etc/crictl.yaml

# Pulling by short image name works (crictl goes through the CRI plugin, which applies the mirrors above)

crictl pull hello-world

crictl images

# ctr does not use the CRI plugin's mirrors and needs the full image reference, including registry and tag (ctr pull is not a ctr command, and a reference without a tag fails to resolve)

ctr image pull docker.io/library/hello-world:latest

# Deploy nerdctl and set up bash completion

rz -E

tar -xf nerdctl-1.4.0-linux-amd64.tar.gz -C /usr/bin

ll /usr/bin/nerdctl

nerdctl completion bash

mkdir -p /etc/bash_completion.d

nerdctl completion bash > /etc/bash_completion.d/nerdctl

source /etc/bash_completion.d/nerdctl

# nerdctl cannot use mirrors configured the legacy way (registry.mirrors)

nerdctl images

nerdctl pull nginx

mkdir /etc/nerdctl

vim /etc/nerdctl/nerdctl.toml

mkdir /etc/containerd/certs.d

# Configure mirrors the new way for nerdctl: one hosts.toml per registry under /etc/containerd/certs.d

mkdir -p /etc/containerd/certs.d

mkdir -p /etc/containerd/certs.d/docker.io

cat > /etc/containerd/certs.d/docker.io/hosts.toml << EOF

server = "https://registry-1.docker.io"

[host."https://docker.m.daocloud.io"]

capabilities = ["pull", "resolve"]

[host."https://09def58152000fc00ff0c00057bad7e0.mirror.swr.myhuaweicloud.com"]

capabilities = ["pull", "resolve"]

EOF

mkdir -p /etc/containerd/certs.d/registry.k8s.io

cat > /etc/containerd/certs.d/registry.k8s.io/hosts.toml << EOF

server = "https://registry.k8s.io"

# DaoCloud is tried first

[host."https://k8s.m.daocloud.io"]

capabilities = ["pull", "resolve"]

[host."https://09def58152000fc00ff0c00057bad7e0.mirror.swr.myhuaweicloud.com"]

capabilities = ["pull", "resolve"]

EOF

# ctr uses these mirrors only when given --hosts-dir

ctr image pull docker.io/library/hello-world:latest --hosts-dir /etc/containerd/certs.d

ctr image ls

ctr image ls | awk '{print $1}'

nerdctl pull hello-world

nerdctl pull busybox

cat /etc/nerdctl/nerdctl.toml

nerdctl info

nerdctl version

history

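Summary: which mirror configuration each container tool uses

- registry.mirrors in config.toml (legacy style): used by crictl, through the CRI plugin

- hosts.toml files under /etc/containerd/certs.d (config_path style): used by nerdctl by default, and by ctr only with --hosts-dir /etc/containerd/certs.d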

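Today's topics

- Container networking

- Container storage

- Container namespaces

- Introduction to Kubernetes

- Installing Kubernetes

Containerd container technology

nerdctl in practice

Managing networks with nerdctl

Networking in containerd is similar to Docker: container network interfaces are virtual interfaces by default.

When you create a container with nerdctl, nerdctl uses a default network named bridge, backed by a Linux bridge device called nerdctl0. Using Linux virtual networking, it creates one virtual interface on the host and one inside the container and connects the two (such a pair is called a veth pair). nerdctl assigns nerdctl0 an IP address and netmask by default (10.4.0.1/24 in the output below), so the host and the containers can communicate through the bridge.

Example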

root@ubuntu2404:~# nerdctl run -d busybox -- sleep infinity

bab94a9f169c0305c47c247d258d90d9e25f2172ec09ecdf14c9452436ed5c15

root@ubuntu2404:~# nerdctl container ls

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

bab94a9f169c docker.io/library/busybox:latest "sleep infinity" 19 seconds ago Up busybox-bab94

root@ubuntu2404:~# nerdctl exec busybox-bab94 -- ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: eth0@if7: <BROADCAST,MULTICAST,UP,LOWER_UP,M-DOWN> mtu 1500 qdisc noqueue

link/ether 7a:a2:3f:04:16:d7 brd ff:ff:ff:ff:ff:ff

inet 10.4.0.4/24 brd 10.4.0.255 scope global eth0

valid_lft forever preferred_lft forever

inet6 fe80::78a2:3fff:fe04:16d7/64 scope link

valid_lft forever preferred_lft forever

Inside the container the interface is shown as 2: eth0@if7, where @if7 means its peer is interface number 7 on the host.

root@ubuntu2404:~# ip a

......

6: nerdctl0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP group default qlen 1000

link/ether a6:4c:c0:32:6a:5c brd ff:ff:ff:ff:ff:ff

inet 10.4.0.1/24 brd 10.4.0.255 scope global nerdctl0

valid_lft forever preferred_lft forever

inet6 fe80::a44c:c0ff:fe32:6a5c/64 scope link

valid_lft forever preferred_lft forever

7: vethf9f77444@if2: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue master nerdctl0 state UP group default

link/ether 76:51:da:0a:6a:a3 brd ff:ff:ff:ff:ff:ff link-netnsid 0

inet6 fe80::7451:daff:fe0a:6aa3/64 scope link

valid_lft forever preferred_lft forever

On the host, the matching interface is 7: vethf9f77444@if2, where @if2 means its peer is interface number 2 inside the container.
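Since the @ifN suffix is what links the two ends of the veth pair, it can be extracted mechanically. The following is a minimal sketch: peer_ifindex is a hypothetical helper written for this note (not part of nerdctl or iproute2), and it assumes the interface header-line format shown in the ip a output above.

```shell
#!/usr/bin/env bash
# Print the peer interface index from an `ip a` / `ip link` header line.
#   "2: eth0@if7: <...>"          -> 7 (inside the container: peer is host ifindex 7)
#   "7: vethf9f77444@if2: <...>"  -> 2 (on the host: peer is container ifindex 2)
# Returns 1 for lines without an @ifN suffix (for example "1: lo: ...").
peer_ifindex() {
  local line=$1
  # <index>: <name>@if<peer>:   capture group 2 is the peer index
  local re='^[0-9]+: ([^@:]+)@if([0-9]+):'
  if [[ $line =~ $re ]]; then
    printf '%s\n' "${BASH_REMATCH[2]}"
  else
    return 1
  fi
}
```

For example, feeding it the eth0 header line from nerdctl exec busybox-bab94 -- ip a prints 7, and ip -o link | grep '^7:' on the host then shows the matching vethf9f77444 interface.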

Example:

root@ubuntu2404:~# nerdctl network ls

NETWORK ID NAME FILE

17f29b073143 bridge /etc/cni/net.d/nerdctl-bridge.conflist

host

none

root@ubuntu2404:~# nerdctl network inspect bridge

[
    {
        "Name": "bridge",
        "Id": "17f29b073143d8cd97b5bbe492bdeffec1c5fee55cc1fe2112c8b9335f8b6121",
        "IPAM": {
            "Config": [
                {
                    "Subnet": "10.4.0.0/24",
                    "Gateway": "10.4.0.1"
                }
            ]
        },
        "Labels": {
            "nerdctl/default-network": "true"
        }
    }
]

# On the host, nerdctl0 (10.4.0.1) is the containers' gateway

root@ubuntu2404:~# ip addr show nerdctl0

6: nerdctl0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP group default qlen 1000

link/ether a6:4c:c0:32:6a:5c brd ff:ff:ff:ff:ff:ff

inet 10.4.0.1/24 brd 10.4.0.255 scope global nerdctl0

valid_lft forever preferred_lft forever

inet6 fe80::a44c:c0ff:fe32:6a5c/64 scope link

valid_lft forever preferred_lft forever
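The container address 10.4.0.4 and the gateway 10.4.0.1 both lie inside the bridge network's Subnet 10.4.0.0/24, which is why the container reaches nerdctl0 directly. A small sketch of that subnet check in pure bash; ip_to_int and in_cidr are hypothetical helpers, and they assume plain dotted-quad IPv4 input without leading zeros.

```shell
#!/usr/bin/env bash
# Convert a dotted-quad IPv4 address to a 32-bit integer.
# Assumes well-formed input without leading zeros ("08" would be parsed as octal).
ip_to_int() {
  local IFS=.
  local -a o
  read -r -a o <<< "$1"
  echo $(( (o[0] << 24) | (o[1] << 16) | (o[2] << 8) | o[3] ))
}

# Succeed if address $1 lies inside CIDR $2 (for example 10.4.0.0/24).
in_cidr() {
  local ip=$1 net=${2%/*} bits=${2#*/}
  # Mask with the top $bits bits set; bash arithmetic is 64-bit,
  # so the shifted value is cut back down to 32 bits.
  local mask=$(( bits == 0 ? 0 : (0xFFFFFFFF << (32 - bits)) & 0xFFFFFFFF ))
  (( ($(ip_to_int "$ip") & mask) == ($(ip_to_int "$net") & mask) ))
}
```

in_cidr 10.4.0.4 10.4.0.0/24 succeeds, while an address such as 10.4.1.4 falls outside the /24 and would have to be routed through the gateway instead.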

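The bridge here is a standard Linux bridge, so brctl show (from the bridge-utils package) lists the bridge and the interfaces attached to it; with iproute2 alone, ip link show master nerdctl0 shows the same ports.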

root@ubuntu2404:~# apt install -y bridge-utils

root@ubuntu2404:~# brctl show

bridge name bridge id STP enabled interfaces

nerdctl0 8000.a64cc0326a5c no vethf9f77444

nerdctl network command help

root@ubuntu2404:~# nerdctl network

Manage networks

Usage: nerdctl network [flags]

Commands:

create Create a network

inspect Display detailed information on one or more networks

ls List networks

prune Remove all unused networks

rm Remove one or more networks

Flags:

-h, --help help for network

See also 'nerdctl --help' for the global flags such as '--namespace', '--snapshotter', and '--cgroup-manager'.

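Managing storage with nerdctl

When creating a container, use the -v option of nerdctl run to bind-mount a host directory into the container and persist its data.

Example: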

root@ubuntu2404:~# nerdctl run -d -v /data:/data busybox -- sleep infinity

6afe638b117d8d8470948b944efd2b913b0a91d366fcd3f88c48baa661c7fcf7

root@ubuntu2404:~# touch /data/f1

root@ubuntu2404:~# nerdctl exec busybox-6afe6 -- ls /data

f1

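The -v option can also mount a named volume: give a volume name instead of a host path, and nerdctl creates the volume if it does not exist yet.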

root@ubuntu2404:~# nerdctl run -d -v data:/data busybox -- sleep infinity

dd82eea219b39c0b53257201b7528c2c52d8a886cd35108b55136c78b9525a48

root@ubuntu2404:~# nerdctl exec busybox-dd82e -- touch /data/f1

root@ubuntu2404:~# nerdctl volume ls

VOLUME NAME DIRECTORY

data /var/lib/nerdctl/1935db59/volumes/k8s.io/data/_data

root@ubuntu2404:~# ls /var/lib/nerdctl/1935db59/volumes/k8s.io/data/_data

f1

nerdctl volume command help

root@ubuntu2404:~# nerdctl volume

Manage volumes

Usage: nerdctl volume [flags]

Commands:

create Create a volume

inspect Display detailed information on one or more volumes

ls List volumes

prune Remove all unused local volumes

rm Remove one or more volumes

Flags:

-h, --help help for volume

See also 'nerdctl --help' for the global flags such as '--namespace', '--snapshotter', and '--cgroup-manager'.

Managing namespaces with nerdctl

root@ubuntu2404:~# nerdctl namespace

Unrelated to Linux namespaces and Kubernetes namespaces

Usage: nerdctl namespace [flags]

Aliases: namespace, ns

Commands:

create Create a new namespace

inspect Display detailed information on one or more namespaces.

ls List containerd namespaces

remove Remove one or more namespaces

update Update labels for a namespace

Flags:

-h, --help help for namespace

See also 'nerdctl --help' for the global flags such as '--namespace', '--snapshotter', and '--cgroup-manager'

Example:

root@ubuntu2404:~# nerdctl namespace ls

NAME CONTAINERS IMAGES VOLUMES LABELS

k8s.io 3 2 1

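Setting the default namespace: configuration file

root@ubuntu2404:~# vim /etc/nerdctl/nerdctl.toml

# 1. containerd socket address (the default location)

address = "unix:///run/containerd/containerd.sock"

# 2. Registry host configuration directories (hosts.toml files under certs.d are read automatically)

hosts_dir = ["/etc/containerd/certs.d", "/etc/docker/certs.d"]

# 3. Default namespace (Kubernetes uses k8s.io)

namespace = "k8s.io"

Setting the default namespace: environment variable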

root@ubuntu2404:~# echo 'export CONTAINERD_NAMESPACE=k8s.io' >> /etc/bash_completion.d/nerdctl
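With both a config file and an environment variable in play, it helps to know which one wins. The sketch below assumes the precedence described in nerdctl's configuration documentation (the --namespace flag, then CONTAINERD_NAMESPACE, then namespace in nerdctl.toml, then "default"). nerdctl_effective_ns is a hypothetical helper that only imitates that lookup, and it reads just a top-level namespace line from the TOML file.

```shell
#!/usr/bin/env bash
# Print the namespace nerdctl would use, assuming the precedence
#   --namespace flag > CONTAINERD_NAMESPACE > nerdctl.toml > "default".
# $1: value of a --namespace flag ('' if none); $2: path to nerdctl.toml.
nerdctl_effective_ns() {
  local flag=$1 toml=${2:-/etc/nerdctl/nerdctl.toml} ns
  if [[ -n $flag ]]; then
    echo "$flag"; return
  fi
  if [[ -n ${CONTAINERD_NAMESPACE:-} ]]; then
    echo "$CONTAINERD_NAMESPACE"; return
  fi
  if [[ -r $toml ]]; then
    # Extract the value of a line such as: namespace = "k8s.io"
    ns=$(sed -n 's/^[[:space:]]*namespace[[:space:]]*=[[:space:]]*"\([^"]*\)".*/\1/p' "$toml" | head -n 1)
    if [[ -n $ns ]]; then
      echo "$ns"; return
    fi
  fi
  echo default
}
```

In this setup both mechanisms point at k8s.io, so nerdctl_effective_ns '' prints k8s.io either way; the variable only matters if the config file is missing or says otherwise.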

Introduction to Kubernetes

The evolution of application deployment

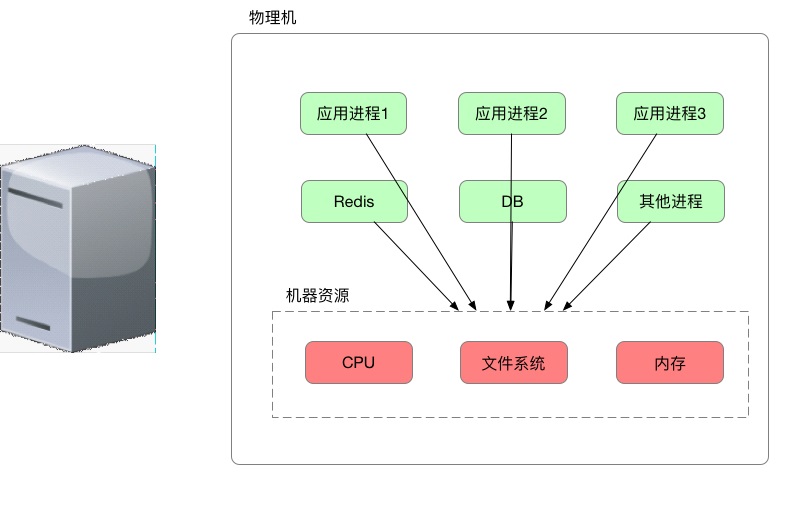

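- Traditional deployment era: organizations ran applications on physical servers. There was no way to define resource boundaries for applications on a physical server, which caused resource allocation problems. For example, if several applications run on one physical server, one of them can take up most of the resources and degrade the performance of the others. One solution is to run each application on its own physical server, but that does not scale because resources are underused, and maintaining many physical servers is expensive.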

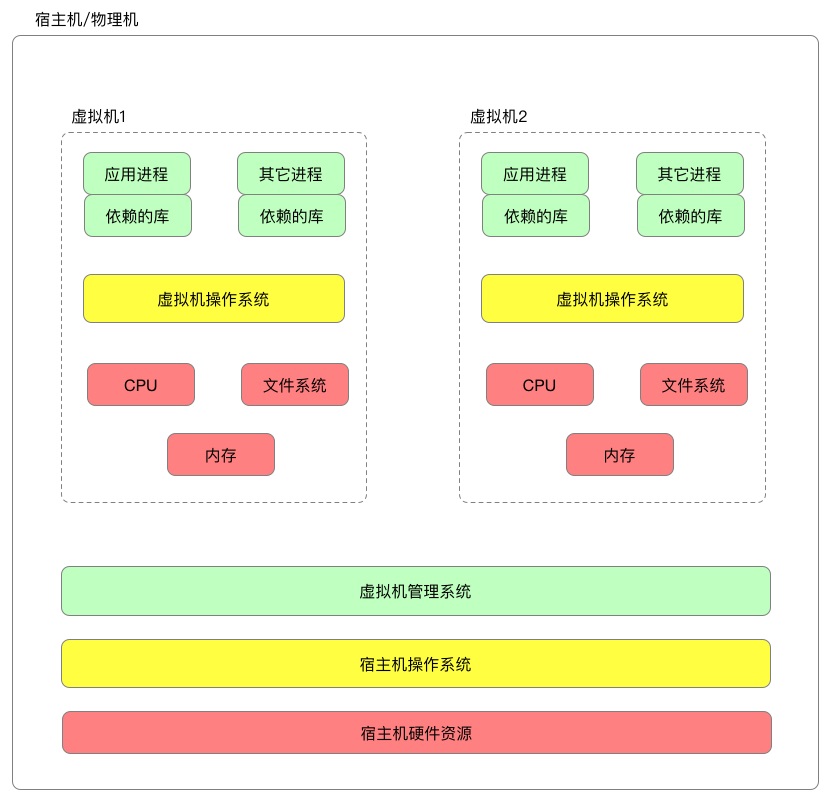

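- Virtualized deployment era: virtualization was introduced as a solution. It lets you run multiple virtual machines (VMs) on a single physical server's CPU. Virtualization isolates applications from each other in separate VMs and provides a level of security, because one application's data cannot be freely accessed by another. Because applications can be added or updated easily and hardware costs drop, virtualization makes better use of a physical server's resources and allows better scalability. Each VM is a complete machine running all components, including its own operating system, on top of virtualized hardware.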

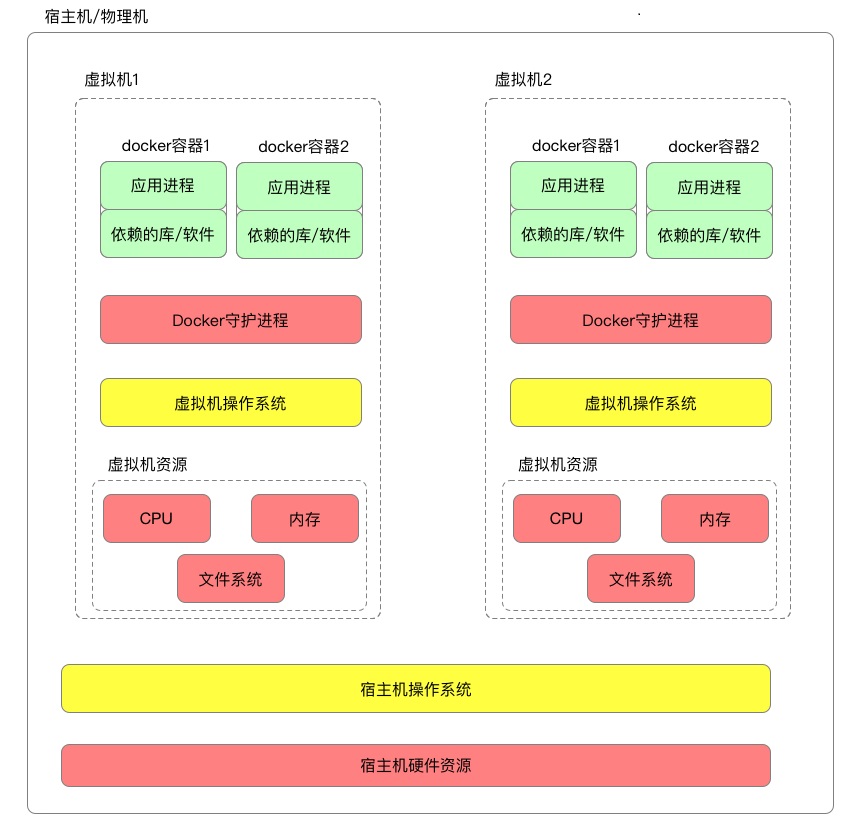

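- Container deployment era: containers are similar to VMs, but they have relaxed isolation properties and share the operating system (OS) among applications, so containers are considered lightweight. Like a VM, a container has its own filesystem, share of CPU, memory, process space, and more. Because they are decoupled from the underlying infrastructure, they are portable across clouds and OS distributions.

Containers have become popular because they offer many benefits, including:

- Agile application creation and deployment: building a container image is simpler and more efficient than building a VM image.

- Continuous development, integration, and deployment: reliable and frequent container image builds and deployments, with quick and easy rollbacks thanks to image immutability.

- Separation of development and operations concerns: application container images are created at build/release time rather than at deployment time, which decouples applications from infrastructure.

- Environmental consistency across development, testing, and production: runs the same on a laptop as in the cloud.

- Cloud and OS distribution portability: runs on Ubuntu, RHEL, CoreOS, on-premises, Google Kubernetes Engine, and anywhere else.

- Application-centric management: raises the level of abstraction from running an OS on virtual hardware to running an application on an OS using logical resources.

- Loosely coupled, distributed, elastic, liberated microservices: applications are broken into smaller, independent pieces that can be deployed and managed dynamically, rather than running as a monolith on one large machine.

- Resource isolation: predictable application performance.

- Resource utilization: high efficiency and density.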

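History of Kubernetes

The name Kubernetes comes from Greek and means "helmsman" or "pilot". K8s is an abbreviation formed by replacing the eight letters "ubernete" with "8".

Google is often cited as starting more than 2 billion containers per week in its data centers, and it began using container technology more than a decade ago. Google first built a system called Borg (and later Omega, an experimental successor) to schedule this enormous number of containers and workloads. Drawing on years of that experience, Google decided to build a new container management system and contribute it to the open-source community so that everyone could benefit. That project is Kubernetes (K8s). Put simply, Kubernetes is an open-source system built on the lessons of Borg and Omega.

In June 2014, Google cloud computing expert Eric Brewer unveiled the new open-source tool at an event in San Francisco.

By May 2015, Google search interest in Kubernetes had already overtaken Mesos and Docker Swarm, and it has climbed steeply ever since, leaving its rivals far behind.

On July 22, 2015, Kubernetes reached v1.0 and was officially released.

In September 2017, Mesosphere announced support for Kubernetes; in October, Docker announced native Kubernetes support in its upcoming releases. That ended the three-way contest among container orchestration engines, with Kubernetes the clear winner.

Today, major public clouds such as AWS, Azure, Google, Alibaba Cloud, and Tencent Cloud offer Kubernetes-based container services, and many vendors, including Rancher, CoreOS, IBM, Mirantis, Oracle, Red Hat, and VMware, are building and promoting Kubernetes-based CaaS or PaaS products. Kubernetes is arguably the most prominent project in the container industry.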

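What is Kubernetes

Kubernetes is a container cluster management system. With Kubernetes you can:

- Deploy applications quickly

- Scale applications quickly

- Roll out new application features seamlessly

- Save resources and optimize hardware usage

Kubernetes features

- Portable: supports public, private, hybrid, and multi-cloud environments

- Extensible: modular, pluggable, hookable, composable

- Automated: automatic deployment, restarts, replication, and scaling

What Kubernetes is not

Kubernetes is not a traditional PaaS (Platform as a Service) system.

- Kubernetes does not limit the types of applications it supports or the application frameworks. It does not restrict supported language runtimes (for example Java, Python, Ruby), and it accommodates 12-factor applications. It does not distinguish between "apps" and "services". Kubernetes supports a wide variety of workloads, including stateful, stateless, and data-processing workloads. If an application can run in a container, it should run well on Kubernetes.

- Kubernetes does not provide middleware (such as message buses), data-processing frameworks (such as Spark), databases (such as MySQL), or cluster storage systems (such as Ceph) as built-in services. Such applications can, however, run on Kubernetes.

- Kubernetes does not deploy source code and does not build applications. Continuous integration (CI) workflows are an area where users have different requirements and preferences, so Kubernetes allows CI workflows to be layered on top of it but does not define how they should work.

- Kubernetes lets users choose their own logging, monitoring, and alerting systems.

- Kubernetes does not provide or mandate a comprehensive application configuration language or system (for example, jsonnet).

- Kubernetes does not provide any machine configuration, maintenance, management, or self-healing systems.

PaaS stands for Platform as a Service: in the cloud era, a server platform or development environment delivered as a service is called a PaaS. A typical PaaS is a development platform framework that developers build on to develop or customize cloud-based applications. Much like Microsoft Excel macros, a PaaS lets developers create applications from built-in software components. Built-in cloud capabilities such as scalability, high availability, and multi-tenancy reduce the amount of code developers have to write.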

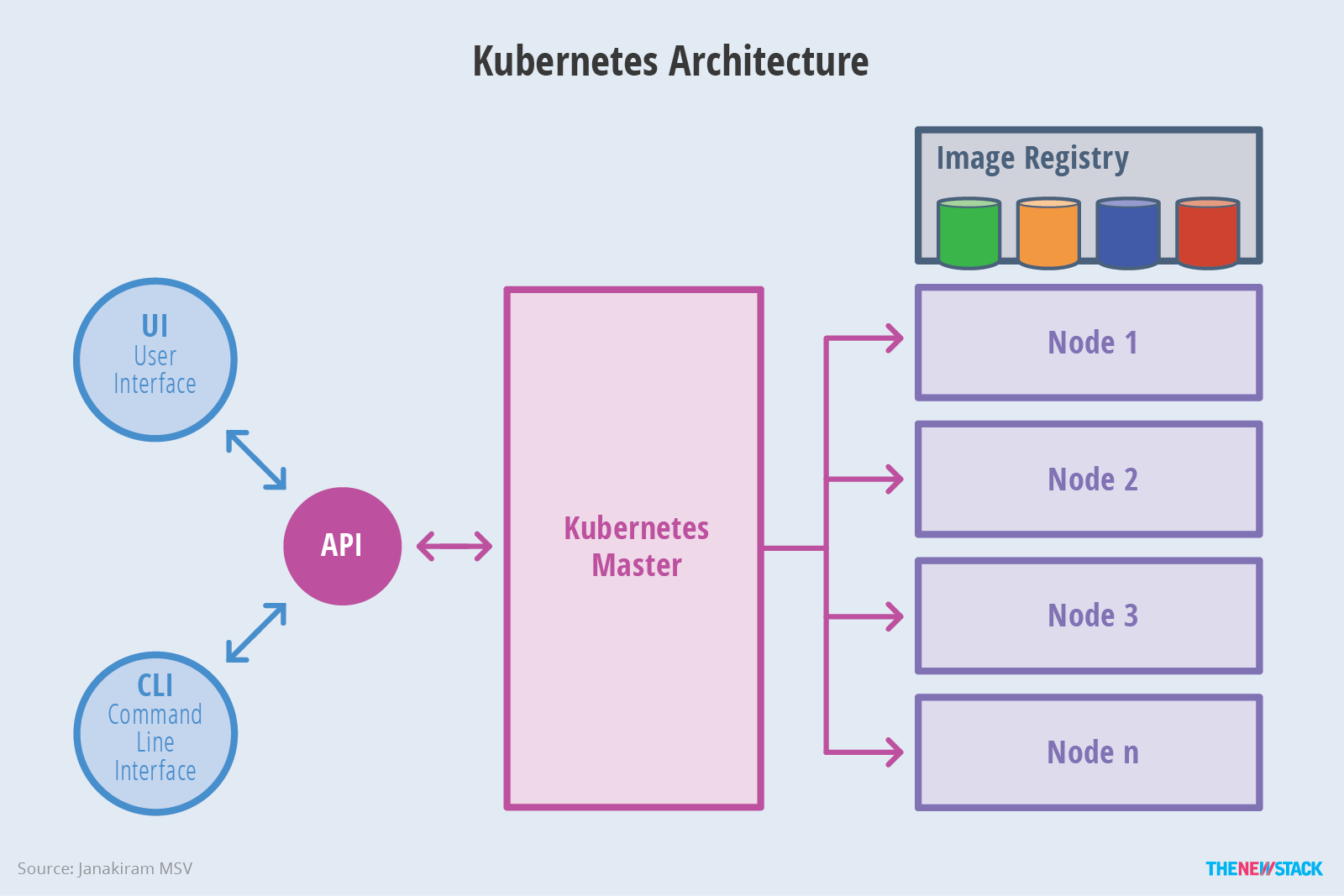

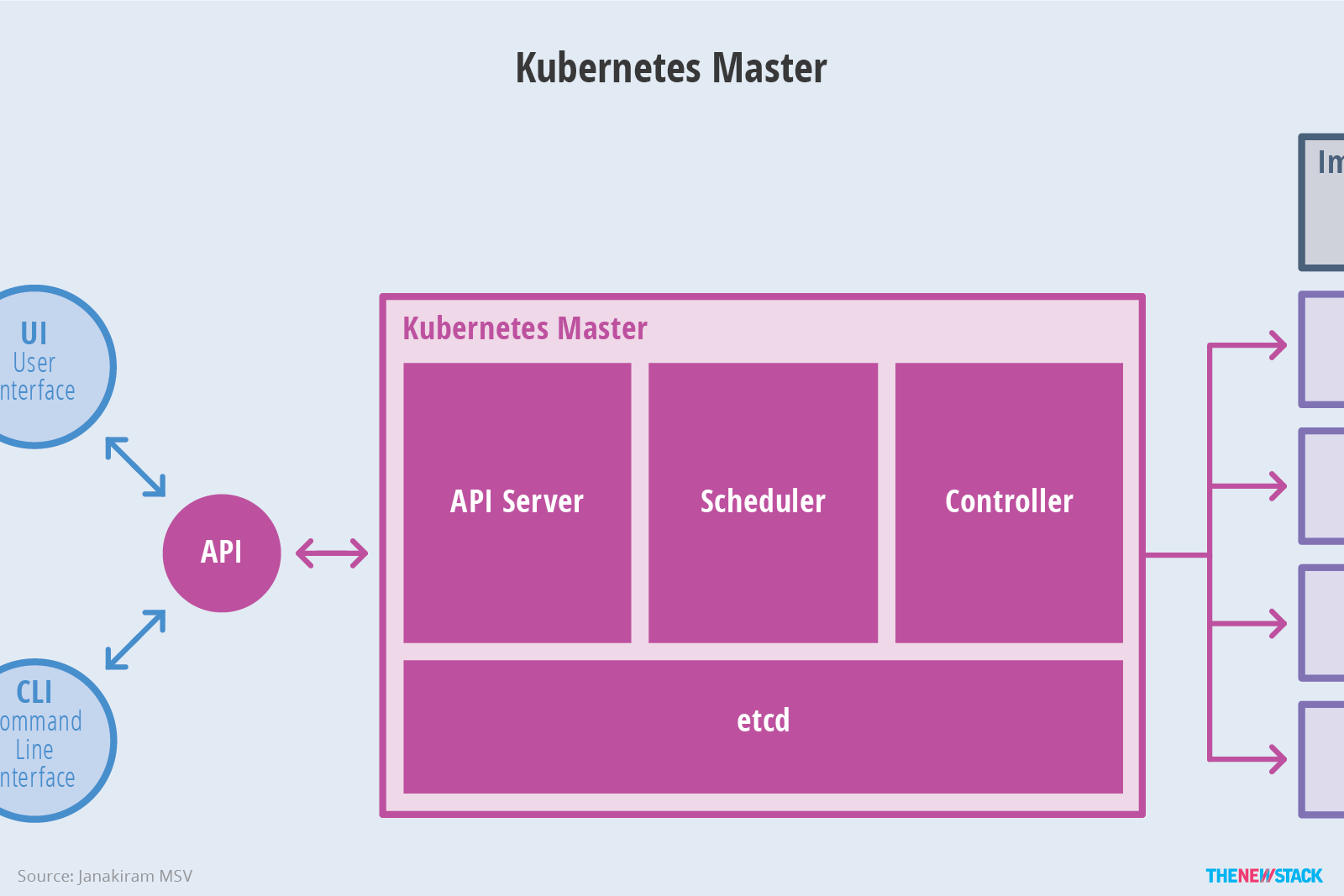

Kubernetes architecture

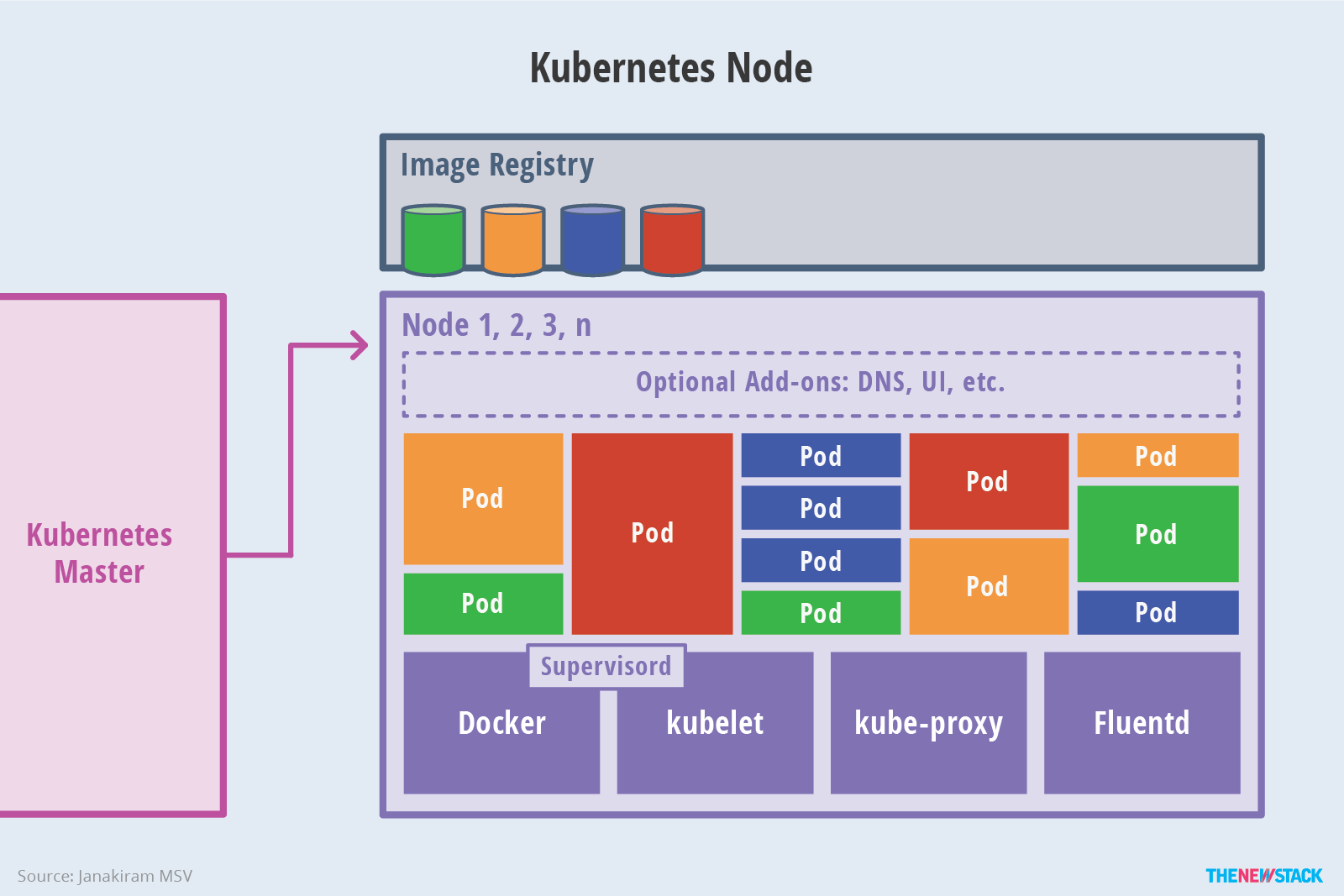

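A Kubernetes cluster consists of a set of machines called nodes. These nodes run the containerized applications managed by Kubernetes.

A cluster has at least one master node and one worker node.

Control plane components

Control plane components make global decisions about the cluster (such as scheduling) and detect and respond to cluster events (for example, starting a new Pod when a Deployment's replicas field is not satisfied).

Control plane components can run on any node in the cluster. For simplicity, setup scripts usually start all control plane components on the same machine and do not run user containers on it.

- kube-apiserver: deployed on the master node, serves the Kubernetes API and is the front end of the control plane. Client tools (CLI or UI) and the other Kubernetes components manage the cluster's resources through it.

- kube-scheduler: deployed on the master node, assigns Pods to suitable nodes according to the configured scheduling policies.

- kube-controller-manager: deployed on the master node, maintains the cluster state, for example failure detection, auto-scaling, and rolling updates.

- etcd: a consistent and highly available key-value store that serves as the backing store for all Kubernetes cluster data.

Worker components

Worker components run on every node (including the master node), maintain the running Pods, and provide the Kubernetes runtime environment.

- kubelet: an agent that runs on every node in the cluster. It manages the container lifecycle and is also involved in volume (CSI) and network (CNI) management. The kubelet takes a set of PodSpecs provided through various mechanisms and ensures that the containers described in them are running and healthy.

- kube-proxy: a network proxy that runs on every node and provides in-cluster service discovery and load balancing for Services. kube-proxy maintains network rules on the node; these rules allow network sessions from inside or outside the cluster to reach Pods.

- Container runtime: handles image management and actually runs Pods and containers (through the CRI). Kubernetes supports several container runtimes: Docker, containerd, CRI-O, rktlet, and any implementation of the Kubernetes CRI (Container Runtime Interface). Since v1.24, Docker Engine is supported only through the external cri-dockerd adapter.

Addons

Addons use Kubernetes resources (DaemonSet, Deployment, and so on) to implement cluster features; addon resources belong to the kube-system namespace. Addons include:

- Cluster DNS (kube-dns, replaced by CoreDNS in current versions): a DNS server that serves DNS records for Kubernetes Services.

- Web UI (Dashboard): a web interface for Kubernetes clusters that lets users manage and troubleshoot both the applications running in the cluster and the cluster itself.

- Container resource monitoring: records common time-series metrics about containers in a central database and provides a UI for browsing that data, for example Heapster.

- Cluster-level logging: saves container logs to a central log store that offers search and browsing, for example Fluentd-Elasticsearch.

- Network plugins: software components that implement the Container Network Interface (CNI) specification. They assign IP addresses to Pods and let Pods communicate with each other inside the cluster.

Why does the master also run kubelet and kube-proxy?

Because the master is also a worker.

The master can run applications too, and almost all Kubernetes components themselves run in Pods, for example etcd, kube-apiserver, and kube-controller-manager.

In a kubeadm-deployed cluster, kubelet is the only Kubernetes component that does not run as a container; it runs as a systemd service.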

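Installing Kubernetes

For details, see: Production environment (Kubernetes documentation)

This course deploys the cluster with kubeadm.

Environment

- VMware Workstation 17

- ubuntu-24.04-live-server-amd64

- Kubernetes 1.30.2

VM hardware configuration

- 2 CPUs

- 4 GB memory

- 1 NAT network adapter

- 1 100 GB disk

Node plan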

| Node | IP | Role |
|---|---|---|
| master30.laoma.cloud | 10.1.8.30 | master |
| worker31.laoma.cloud | 10.1.8.31 | worker |
| worker32.laoma.cloud | 10.1.8.32 | worker |

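Prepare the template

System preparation

Install the OS

Perform a minimal installation of Ubuntu 24.04 without a swap partition, using the following partitions: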

- /boot 2G

- / 90G

Configure package repositories

Switch the OS repositories to the Huawei Cloud mirror for faster downloads.

root@ubuntu2404:~# cat > /etc/apt/sources.list.d/ubuntu.sources <<'EOF'

Types: deb

URIs: http://mirrors.huaweicloud.com/ubuntu/

Suites: noble noble-updates noble-backports

Components: main restricted universe multiverse

Signed-By: /usr/share/keyrings/ubuntu-archive-keyring.gpg

#Types: deb

#URIs: http://security.ubuntu.com/ubuntu/

#Suites: noble-security

#Components: main restricted universe multiverse

#Signed-By: /usr/share/keyrings/ubuntu-archive-keyring.gpg

EOF

containerd repository

# Import the containerd repository key

root@ubuntu2404:~# curl -fsSL https://mirrors.huaweicloud.com/docker-ce/linux/ubuntu/gpg | gpg --dearmour -o /etc/apt/trusted.gpg.d/containerd.gpg

# Add the containerd repository

root@ubuntu2404:~# cat << 'EOF' > /etc/apt/sources.list.d/docker-ce.list

deb [arch=amd64] https://mirrors.huaweicloud.com/docker-ce/linux/ubuntu noble stable

EOF

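Kubernetes has changed where its package repositories are hosted and how they are used; for version 1.28 and later, configure the repository the new way shown below.

This example configures version 1.30. For another version, replace the version string: for example, to install 1.29, replace v1.30 with v1.29 in the configuration below.

At the time of writing, this mirror supports v1.24 through v1.35, and later versions will be added.

# Add the Kubernetes repository key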

root@ubuntu2404:~# curl -fsSL https://mirrors.aliyun.com/kubernetes-new/core/stable/v1.30/deb/Release.key | gpg --dearmor -o /etc/apt/keyrings/kubernetes-apt-keyring.gpg

# Add the Kubernetes repository

root@ubuntu2404:~# echo "deb [signed-by=/etc/apt/keyrings/kubernetes-apt-keyring.gpg] https://mirrors.aliyun.com/kubernetes-new/core/stable/v1.30/deb/ /" > /etc/apt/sources.list.d/kubernetes.list

Install base packages

root@ubuntu2404:~# apt update && apt install -y vim lrzsz bash-completion open-vm-tools apt-transport-https sshpass

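# vim: a powerful terminal text editor for configuration files, code, and so on.

# lrzsz: quick file upload and download from Xshell (rz/sz).

# bash-completion: command-line completion; press Tab to complete commands, options, and file names.

# open-vm-tools: VMware guest tools for time sync, drag-and-drop, display resizing, and so on.

# apt-transport-https: lets apt download packages over HTTPS, for secure repositories.

# sshpass: non-interactive SSH password login from the command line, mostly used in scripts.

Set the IP address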

root@ubuntu2404:~# mkdir /etc/netplan/origin

root@ubuntu2404:~# mv /etc/netplan/*yaml /etc/netplan/origin

root@ubuntu2404:~# cat > /etc/netplan/00-static.yaml <<EOF
network:
  ethernets:
    ens33:
      dhcp4: no
      addresses:
        - 10.1.8.30/24
      routes:
        - to: default
          via: 10.1.8.2
      nameservers:
        addresses:
          - 10.1.8.2
          - 223.5.5.5
  version: 2
EOF

root@ubuntu2404:~# chmod 600 /etc/netplan/00-static.yaml

root@ubuntu2404:~# netplan apply

Configure /etc/hosts

root@ubuntu2404:~# cat >> /etc/hosts << 'EOF'

###### kubernetes #####

10.1.8.30 master30.laoma.cloud master30

10.1.8.31 worker31.laoma.cloud worker31

10.1.8.32 worker32.laoma.cloud worker32

EOF

Disable swap

If a swap partition or swap file exists, disable it: by default the kubelet refuses to start while swap is enabled.

root@ubuntu2404:~# swapoff -a && sed -i '/^.*swap/d' /etc/fstab

root@ubuntu2404:~# rm -f /swap.img
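Whether swap is really off can be read from /proc/swaps, which keeps its header line even when no swap area is active. A minimal sketch; swap_is_off is a hypothetical helper, and the file path is a parameter only so it can be tried on a sample file.

```shell
#!/usr/bin/env bash
# Succeed only if no swap area is active.
# /proc/swaps always has one header line; every further line is an active swap area.
swap_is_off() {
  local swaps=${1:-/proc/swaps}
  [[ $(wc -l < "$swaps") -le 1 ]]
}
```

swapon --show gives the same answer interactively: it prints nothing when no swap is active.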

Set the time zone and time synchronization

root@ubuntu2404:~# timedatectl set-timezone Asia/Shanghai

root@ubuntu2404:~# apt-get install -y chrony

root@ubuntu2404:~# systemctl enable chrony --now

Configure SSH

# Stop the SSH server from reverse-resolving client IPs into host names, so clients connect faster

root@ubuntu2404:~# echo 'UseDNS no' >> /etc/ssh/sshd_config

# Stop the SSH client from checking the server's host key; otherwise the first connection asks you to type yes

root@ubuntu2404:~# echo 'StrictHostKeyChecking no' >> /etc/ssh/ssh_config

# Generate a key pair

root@ubuntu2404:~# ssh-keygen -N '' -f ~/.ssh/id_rsa -t rsa

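# Set up passwordless login to this host: replace password with the actual password (VMs cloned from this template share the key, so they can also log in to each other)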

root@ubuntu2404:~# sshpass -p password ssh-copy-id root@localhost

Configure IPVS

# 1. Install IPVS dependencies

root@ubuntu2404:~# apt install -y iptables ipvsadm ipset conntrack

# 2-1. Load the base networking modules now (temporary, effective immediately)

root@ubuntu2404:~# modprobe overlay

root@ubuntu2404:~# modprobe br_netfilter

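# Module descriptions:

# br_netfilter: lets traffic crossing Linux bridges be filtered by iptables; required for Kubernetes networking.

# overlay: OverlayFS module; required by container runtimes (containerd/docker) for layered images.

# 2-2. Load the IPVS kernel modules now (temporary, effective immediately)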

root@ubuntu2404:~# modprobe ip_vs

root@ubuntu2404:~# modprobe ip_vs_rr

root@ubuntu2404:~# modprobe ip_vs_wrr

root@ubuntu2404:~# modprobe ip_vs_lc

root@ubuntu2404:~# modprobe ip_vs_sh

root@ubuntu2404:~# modprobe nf_conntrack

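# Module descriptions:

# ip_vs: IPVS core module that provides layer-4 load balancing; the basis of kube-proxy's ipvs mode.

# ip_vs_rr: IPVS round-robin scheduler, hands out requests in turn.

# ip_vs_wrr: weighted round-robin, distributes traffic by backend weight.

# ip_vs_lc: least-connection scheduler, prefers the backend with the fewest connections.

# ip_vs_sh: source hashing, always sends the same client IP to the same backend.

# nf_conntrack: connection tracking, records connection state so packets are forwarded correctly.

# 3. Load the modules persistently (effective at every boot)

# systemd-modules-load.service loads this configuration file automatically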

root@ubuntu2404:~# cat > /etc/modules-load.d/k8s-net.conf << EOF

# K8s base networking

br_netfilter

overlay

# Required for IPVS

ip_vs

ip_vs_rr

ip_vs_wrr

ip_vs_lc

ip_vs_sh

nf_conntrack

EOF
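After a reboot, every module listed in k8s-net.conf should be loaded. This sketch compares the file with /proc/modules; missing_modules is a hypothetical helper, and it assumes the listed modules are loadable modules (a module built into the kernel never appears in /proc/modules and would be reported as missing).

```shell
#!/usr/bin/env bash
# Print each module named in a modules-load.d file that is not in /proc/modules.
# $1: modules-load.d file; $2: modules list (defaults to /proc/modules).
missing_modules() {
  local conf=${1:-/etc/modules-load.d/k8s-net.conf}
  local procmods=${2:-/proc/modules}
  local name
  # "|| [[ -n $name ]]" also handles a last line without a trailing newline.
  while read -r name _ || [[ -n $name ]]; do
    [[ -z $name || $name == \#* ]] && continue   # skip blank lines and comments
    # /proc/modules lines start with "<name> <size> ..."
    grep -q "^${name} " "$procmods" || echo "$name"
  done < "$conf"
}
```

Running missing_modules without arguments on a node prints nothing when every listed module is loaded; lsmod | grep -e ip_vs -e br_netfilter is the manual equivalent.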

Configure other kernel parameters

# Kernel parameters: pass bridged traffic to the iptables chains and enable IP forwarding

root@ubuntu2404:~# cat > /etc/sysctl.d/k8s.conf << 'EOF'

net.bridge.bridge-nf-call-iptables=1

net.bridge.bridge-nf-call-ip6tables=1

net.ipv4.ip_forward=1

vm.swappiness=0

EOF

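# Parameter descriptions:

# net.bridge.bridge-nf-call-iptables=1: pass bridged IPv4 traffic through iptables

# net.bridge.bridge-nf-call-ip6tables=1: pass bridged IPv6 traffic through ip6tables

# net.ipv4.ip_forward=1: enable IPv4 packet forwarding (routing)

# vm.swappiness=0: tell the kernel to avoid swapping as far as possible (this does not disable swap by itself)

# Apply the parameters immediately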

root@ubuntu2404:~# sysctl -p /etc/sysctl.d/k8s.conf
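sysctl -p prints the values it sets, but a later check is handy too. Each key maps to a file under /proc/sys with the dots replaced by slashes (net.ipv4.ip_forward becomes /proc/sys/net/ipv4/ip_forward). The sketch relies on that mapping; sysctl_mismatches is a hypothetical helper that handles only single-token values like the ones in k8s.conf.

```shell
#!/usr/bin/env bash
# Compare each key=value line of a sysctl.d file with the live value under
# /proc/sys and print every mismatch. Only single-token values are handled.
# $1: sysctl.d file; $2: sysctl root (defaults to /proc/sys).
sysctl_mismatches() {
  local conf=${1:-/etc/sysctl.d/k8s.conf}
  local root=${2:-/proc/sys}
  local key want have
  while IFS='=' read -r key want || [[ -n $key ]]; do
    key=${key//[[:space:]]/}     # drop spaces around "key = value"
    want=${want//[[:space:]]/}
    [[ -z $key || $key == \#* || $key == \;* ]] && continue   # blanks and comments
    # net.ipv4.ip_forward -> $root/net/ipv4/ip_forward
    have=$(cat "$root/${key//.//}" 2>/dev/null) || have="<missing>"
    [[ $have == "$want" ]] || echo "$key: want=$want have=$have"
  done < "$conf"
}
```

Empty output means the live values match the file. The net.bridge.* keys exist only while br_netfilter is loaded, so a missing value there usually points back to the module step.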

Kubernetes preparation

Configure containerd

root@ubuntu2404:~# apt-get install -y containerd.io=1.7.20-1 cri-tools

# Set crictl's runtime-endpoint

root@ubuntu2404:~# crictl config runtime-endpoint unix:///var/run/containerd/containerd.sock

root@ubuntu2404:~# containerd config default > /etc/containerd/config.toml

# Set SystemdCgroup = true and point sandbox_image (the pause image) at the Alibaba Cloud mirror

root@ubuntu2404:~# sed -i 's/SystemdCgroup = false/SystemdCgroup = true/' /etc/containerd/config.toml

root@ubuntu2404:~# sed -i 's|sandbox_image = ".*"|sandbox_image = "registry.aliyuncs.com/google_containers/pause:3.9"|' /etc/containerd/config.toml
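A quick way to confirm that both sed substitutions matched. check_containerd_config is a hypothetical helper; it assumes the "key = value" spacing produced by containerd config default, which the sed patterns above rely on as well.

```shell
#!/usr/bin/env bash
# Check that config.toml contains both edits made by the sed commands.
# Prints what is wrong and returns 1 if either edit is missing.
check_containerd_config() {
  local cfg=${1:-/etc/containerd/config.toml}
  local rc=0
  grep -Eq '^[[:space:]]*SystemdCgroup = true' "$cfg" \
    || { echo "SystemdCgroup is not true"; rc=1; }
  grep -Eq '^[[:space:]]*sandbox_image = "registry.aliyuncs.com/google_containers/pause:3.9"' "$cfg" \
    || { echo "sandbox_image does not point to the pause:3.9 mirror"; rc=1; }
  return $rc
}
```

On a node, containerd config dump | grep -E 'SystemdCgroup|sandbox_image' shows the same two settings as containerd reads them from its configuration.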

# Configure registry mirrors

root@ubuntu2404:~# vim /etc/containerd/config.toml

# Find the mirrors line

  [plugins."io.containerd.grpc.v1.cri".registry.mirrors]

# Add the following four lines below it (TOML ignores indentation; keep it consistent for readability)

    [plugins."io.containerd.grpc.v1.cri".registry.mirrors."docker.io"]

      endpoint = ["https://docker.m.daocloud.io","https://09def58152000fc00ff0c00057bad7e0.mirror.swr.myhuaweicloud.com"]

    [plugins."io.containerd.grpc.v1.cri".registry.mirrors."registry.k8s.io"]

      endpoint = ["https://k8s.m.daocloud.io","https://09def58152000fc00ff0c00057bad7e0.mirror.swr.myhuaweicloud.com"]

# Restart the service

root@ubuntu2404:~# systemctl restart containerd.service

# The containerd service is already enabled and started by default

crictl goes through containerd's CRI interface, so it reads the registry.mirrors configuration in /etc/containerd/config.toml.

Test the pull:

root@ubuntu2404:~# crictl pull hello-world

Install nerdctl and the CNI plugins

nerdctl project: https://github.com/containerd/nerdctl/releases

CNI plugins project: https://github.com/containernetworking/plugins/releases

# Download and install

root@ubuntu2404:~# wget http://192.168.46.100/01.softwares/03.stage-3/nerdctl-1.7.7-linux-amd64.tar.gz

root@ubuntu2404:~# tar -xf nerdctl-1.7.7-linux-amd64.tar.gz -C /usr/bin/

# Download the CNI plugins that nerdctl needs and install them into /opt/cni/bin

root@ubuntu2404:~# wget http://192.168.46.100/01.softwares/03.stage-3/cni-plugins-linux-amd64-v1.6.0.tgz

root@ubuntu2404:~# mkdir -p /opt/cni/bin

root@ubuntu2404:~# tar -xf cni-plugins-linux-amd64-v1.6.0.tgz -C /opt/cni/bin

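nerdctl talks to containerd through its native API rather than the CRI, so it does not read the CRI-only registry configuration; it uses its own registry host configuration instead.

By default nerdctl reads hosts.toml files from /etc/containerd/certs.d (its hosts_dir setting), so the Docker Hub mirror has to be configured there separately.

# Configure the docker.io mirror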

mkdir -p /etc/containerd/certs.d/docker.io

cat > /etc/containerd/certs.d/docker.io/hosts.toml << EOF

server = "https://registry-1.docker.io"

[host."https://docker.m.daocloud.io"]

capabilities = ["pull", "resolve"]

[host."https://09def58152000fc00ff0c00057bad7e0.mirror.swr.myhuaweicloud.com"]

capabilities = ["pull", "resolve"]

EOF

# Configure the registry.k8s.io mirror

mkdir -p /etc/containerd/certs.d/registry.k8s.io

cat > /etc/containerd/certs.d/registry.k8s.io/hosts.toml << EOF

server = "https://registry.k8s.io"

[host."https://k8s.m.daocloud.io"]

capabilities = ["pull", "resolve"]

[host."https://09def58152000fc00ff0c00057bad7e0.mirror.swr.myhuaweicloud.com"]

capabilities = ["pull", "resolve"]

EOF
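The two hosts.toml files differ only in the registry name, the fallback server, and the mirror list, so they can be generated. The sketch writes one [host] entry per mirror in the order given; containerd tries the hosts in that order and falls back to server. write_hosts_toml is a hypothetical helper, and the base directory is a parameter so it can be tried outside /etc.

```shell
#!/usr/bin/env bash
# Write <certs_dir>/<registry>/hosts.toml.
# $1: base directory (normally /etc/containerd/certs.d)
# $2: registry name, e.g. docker.io
# $3: fallback server URL
# $4...: mirror URLs, most preferred first
write_hosts_toml() {
  local certs_dir=$1 registry=$2 server=$3
  shift 3
  local dir="$certs_dir/$registry" mirror
  mkdir -p "$dir" || return 1
  {
    printf 'server = "%s"\n' "$server"
    for mirror in "$@"; do
      printf '\n[host."%s"]\n  capabilities = ["pull", "resolve"]\n' "$mirror"
    done
  } > "$dir/hosts.toml"
}
```

For example, write_hosts_toml /etc/containerd/certs.d registry.k8s.io https://registry.k8s.io https://k8s.m.daocloud.io produces a file equivalent to the first entry of the registry.k8s.io configuration above.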

Test the pull:

root@ubuntu2404:~# nerdctl pull hello-world

Install the Kubernetes packages

# List the available versions

root@ubuntu2404:~# apt list kubeadm -a|head

WARNING: apt does not have a stable CLI interface. Use with caution in scripts.

Listing... Done

kubeadm/unknown 1.30.14-1.1 amd64

kubeadm/unknown 1.30.13-1.1 amd64

kubeadm/unknown 1.30.12-1.1 amd64

kubeadm/unknown 1.30.11-1.1 amd64

kubeadm/unknown 1.30.10-1.1 amd64

kubeadm/unknown 1.30.9-1.1 amd64

kubeadm/unknown 1.30.8-1.1 amd64

kubeadm/unknown 1.30.7-1.1 amd64

kubeadm/unknown 1.30.6-1.1 amd64

kubeadm/unknown 1.30.5-1.1 amd64

kubeadm/unknown 1.30.4-1.1 amd64

kubeadm/unknown 1.30.3-1.1 amd64

kubeadm/unknown 1.30.2-1.1 amd64

kubeadm/unknown 1.30.1-1.1 amd64

kubeadm/unknown 1.30.0-1.1 amd64

......

root@ubuntu2404:~# apt install -y kubeadm=1.30.2-1.1 kubelet=1.30.2-1.1 kubectl=1.30.2-1.1
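The three packages have to be pinned to the same version. A small sketch that builds the pinned arguments; k8s_pkg_args is a hypothetical helper, and it assumes the -1.1 package revision shown by apt list above.

```shell
#!/usr/bin/env bash
# Print "kubeadm=<ver>-<rev> kubelet=<ver>-<rev> kubectl=<ver>-<rev>".
# $1: Kubernetes version, e.g. 1.30.2; $2: package revision (default 1.1).
k8s_pkg_args() {
  local ver=$1 rev=${2:-1.1} pkg
  local -a args=()
  for pkg in kubeadm kubelet kubectl; do
    args+=("$pkg=$ver-$rev")
  done
  echo "${args[*]}"
}
```

apt install -y $(k8s_pkg_args 1.30.2) installs the same set as the command above. Holding the packages afterwards with apt-mark hold kubeadm kubelet kubectl, as the upstream kubeadm installation guide does, keeps a routine apt upgrade from changing the cluster version.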

# Enable and start the kubelet service

root@ubuntu2404:~# systemctl enable kubelet --now

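At this point the kubelet service stays in the activating (auto-restart) state because it has no configuration yet; it becomes active once kubeadm init or kubeadm join has completed.

Configure command completion

# crictl command completion

root@ubuntu2404:~# mkdir -p /etc/bash_completion.d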

root@ubuntu2404:~# crictl completion bash > /etc/bash_completion.d/crictl

root@ubuntu2404:~# source /etc/bash_completion.d/crictl

# nerdctl command completion, plus the default namespace

root@ubuntu2404:~# nerdctl completion bash > /etc/bash_completion.d/nerdctl

root@ubuntu2404:~# echo 'export CONTAINERD_NAMESPACE=k8s.io' >> /etc/bash_completion.d/nerdctl

root@ubuntu2404:~# source /etc/bash_completion.d/nerdctl

==Note==: CONTAINERD_NAMESPACE must be set here. Otherwise nerdctl imports images into the default namespace, where Kubernetes cannot use them: Kubernetes uses images from the k8s.io namespace.

# kubectl command completion

root@ubuntu2404:~# kubectl completion bash > /etc/bash_completion.d/kubectl

root@ubuntu2404:~# source /etc/bash_completion.d/kubectl

# kubeadm command completion

root@ubuntu2404:~# kubeadm completion bash > /etc/bash_completion.d/kubeadm

root@ubuntu2404:~# source /etc/bash_completion.d/kubeadm

Shut down the VM

root@ubuntu2404:~# init 0

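Prepare the nodes

### Clone the VMs

# Use full clones to create the 3 VMs from the template.

# On each VM, set its own host name and network. The interface name may differ from the template (ens33 there, ens32 below); check it with ip link before writing the file.

# master30 node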

root@master30:~# hostnamectl hostname master30.laoma.cloud

root@master30:~# cat > /etc/netplan/00-static.yaml <<EOF
network:
  ethernets:
    ens32:
      dhcp4: no
      addresses:
        - 10.1.8.30/24
      routes:
        - to: default
          via: 10.1.8.2
      nameservers:
        addresses:
          - 10.1.8.2
          - 223.5.5.5
  version: 2
EOF

root@master30:~# netplan apply

# worker31 node

root@worker31:~# hostnamectl hostname worker31.laoma.cloud

root@worker31:~# cat > /etc/netplan/00-static.yaml <<EOF
network:
  ethernets:
    ens32:
      dhcp4: no
      addresses:
        - 10.1.8.31/24
      routes:
        - to: default
          via: 10.1.8.2
      nameservers:
        addresses:
          - 10.1.8.2
          - 223.5.5.5
  version: 2
EOF

root@worker31:~# netplan apply

# worker32 node

root@worker32:~# hostnamectl hostname worker32.laoma.cloud

root@worker32:~# cat > /etc/netplan/00-static.yaml <<EOF
network:
  ethernets:
    ens32:
      dhcp4: no
      addresses:
        - 10.1.8.32/24
      routes:
        - to: default
          via: 10.1.8.2
      nameservers:
        addresses:
          - 10.1.8.2
          - 223.5.5.5
  version: 2
EOF

root@worker32:~# netplan apply
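The three netplan files differ only in the interface name and the address, so a small generator avoids copy errors. render_netplan is a hypothetical helper; it assumes this lab's /24 prefix, gateway 10.1.8.2, and DNS servers 10.1.8.2 and 223.5.5.5. The heredoc must keep its space indentation, because YAML nesting depends on it.

```shell
#!/usr/bin/env bash
# Print a static netplan configuration for one node.
# $1: interface name (ens32/ens33 in this lab); $2: IPv4 address; $3: gateway.
render_netplan() {
  local iface=$1 ip=$2 gw=${3:-10.1.8.2}
  cat <<EOF
network:
  ethernets:
    ${iface}:
      dhcp4: no
      addresses:
        - ${ip}/24
      routes:
        - to: default
          via: ${gw}
      nameservers:
        addresses:
          - ${gw}
          - 223.5.5.5
  version: 2
EOF
}
```

On worker31, for example: render_netplan ens32 10.1.8.31 > /etc/netplan/00-static.yaml && chmod 600 /etc/netplan/00-static.yaml && netplan apply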

Set up the cluster

Pull images

Initializing the cluster requires downloading images, so pull them in advance.

root@master30:~# kubeadm config images pull --kubernetes-version=v1.30.2

[config/images] Pulled registry.k8s.io/kube-apiserver:v1.30.2

[config/images] Pulled registry.k8s.io/kube-controller-manager:v1.30.2

[config/images] Pulled registry.k8s.io/kube-scheduler:v1.30.2

[config/images] Pulled registry.k8s.io/kube-proxy:v1.30.2

[config/images] Pulled registry.k8s.io/coredns/coredns:v1.11.1

[config/images] Pulled registry.k8s.io/pause:3.9

[config/images] Pulled registry.k8s.io/etcd:3.5.12-0

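Alternative: use the Alibaba Cloud image repository. (The output below repeats the default run; with --image-repository, the pulled images are prefixed with registry.aliyuncs.com/google_containers instead of registry.k8s.io.)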

root@master30:~# kubeadm config images pull --kubernetes-version=v1.30.2 --image-repository registry.aliyuncs.com/google_containers

[config/images] Pulled registry.k8s.io/kube-apiserver:v1.30.2

[config/images] Pulled registry.k8s.io/kube-controller-manager:v1.30.2

[config/images] Pulled registry.k8s.io/kube-scheduler:v1.30.2

[config/images] Pulled registry.k8s.io/kube-proxy:v1.30.2

[config/images] Pulled registry.k8s.io/coredns/coredns:v1.11.1

[config/images] Pulled registry.k8s.io/pause:3.9

[config/images] Pulled registry.k8s.io/etcd:3.5.12-0

Initialize the cluster

root@master30:~# kubeadm init --kubernetes-version=v1.30.2 --pod-network-cidr=10.224.0.0/16

Alternative: initialize the cluster using the Alibaba Cloud image repository:

root@master30:~# kubeadm init --kubernetes-version=v1.30.2 --pod-network-cidr=10.224.0.0/16 --image-repository registry.aliyuncs.com/google_containers

Initialization output:

[init] Using Kubernetes version: v1.30.2

[preflight] Running pre-flight checks

[preflight] Pulling images required for setting up a Kubernetes cluster

[preflight] This might take a minute or two, depending on the speed of your internet connection

[preflight] You can also perform this action in beforehand using 'kubeadm config images pull'

[certs] Using certificateDir folder "/etc/kubernetes/pki"

[certs] Generating "ca" certificate and key

[certs] Generating "apiserver" certificate and key

[certs] apiserver serving cert is signed for DNS names [kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local master30.laoma.cloud] and IPs [10.96.0.1 10.1.8.30]

[certs] Generating "apiserver-kubelet-client" certificate and key

[certs] Generating "front-proxy-ca" certificate and key

[certs] Generating "front-proxy-client" certificate and key

[certs] Generating "etcd/ca" certificate and key

[certs] Generating "etcd/server" certificate and key

[certs] etcd/server serving cert is signed for DNS names [localhost master30.laoma.cloud] and IPs [10.1.8.30 127.0.0.1 ::1]

[certs] Generating "etcd/peer" certificate and key

[certs] etcd/peer serving cert is signed for DNS names [localhost master30.laoma.cloud] and IPs [10.1.8.30 127.0.0.1 ::1]

[certs] Generating "etcd/healthcheck-client" certificate and key

[certs] Generating "apiserver-etcd-client" certificate and key

[certs] Generating "sa" key and public key

[kubeconfig] Using kubeconfig folder "/etc/kubernetes"

[kubeconfig] Writing "admin.conf" kubeconfig file

[kubeconfig] Writing "super-admin.conf" kubeconfig file

[kubeconfig] Writing "kubelet.conf" kubeconfig file

[kubeconfig] Writing "controller-manager.conf" kubeconfig file

[kubeconfig] Writing "scheduler.conf" kubeconfig file

[etcd] Creating static Pod manifest for local etcd in "/etc/kubernetes/manifests"

[control-plane] Using manifest folder "/etc/kubernetes/manifests"

[control-plane] Creating static Pod manifest for "kube-apiserver"

[control-plane] Creating static Pod manifest for "kube-controller-manager"

[control-plane] Creating static Pod manifest for "kube-scheduler"

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Starting the kubelet

[wait-control-plane] Waiting for the kubelet to boot up the control plane as static Pods from directory "/etc/kubernetes/manifests"

[kubelet-check] Waiting for a healthy kubelet. This can take up to 4m0s

[kubelet-check] The kubelet is healthy after 502.398615ms

[api-check] Waiting for a healthy API server. This can take up to 4m0s

[api-check] The API server is healthy after 7.50265248s

[upload-config] Storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace

[kubelet] Creating a ConfigMap "kubelet-config" in namespace kube-system with the configuration for the kubelets in the cluster

[upload-certs] Skipping phase. Please see --upload-certs

[mark-control-plane] Marking the node master30.laoma.cloud as control-plane by adding the labels: [node-role.kubernetes.io/control-plane node.kubernetes.io/exclude-from-external-load-balancers]

[mark-control-plane] Marking the node master30.laoma.cloud as control-plane by adding the taints [node-role.kubernetes.io/control-plane:NoSchedule]

[bootstrap-token] Using token: ybenal.6mszwb1nf8nck72g

[bootstrap-token] Configuring bootstrap tokens, cluster-info ConfigMap, RBAC Roles

[bootstrap-token] Configured RBAC rules to allow Node Bootstrap tokens to get nodes

[bootstrap-token] Configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials

[bootstrap-token] Configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token

[bootstrap-token] Configured RBAC rules to allow certificate rotation for all node client certificates in the cluster

[bootstrap-token] Creating the "cluster-info" ConfigMap in the "kube-public" namespace

[kubelet-finalize] Updating "/etc/kubernetes/kubelet.conf" to point to a rotatable kubelet client certificate and key

[addons] Applied essential addon: CoreDNS

[addons] Applied essential addon: kube-proxy

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

Alternatively, if you are the root user, you can run:

export KUBECONFIG=/etc/kubernetes/admin.conf

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 10.1.8.30:6443 --token mi0yt8.1tzza4q64dr8y3pc \
        --discovery-token-ca-cert-hash sha256:5606e09618330aee8859abe3ea4cd8734f9b540630048a6e1c3aaf6c54d486fd

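Option notes:

--image-repository registry.aliyuncs.com/google_containers: the registry from which the control-plane images are pulled.

--kubernetes-version=v1.30.2: the Kubernetes version to install.

--pod-network-cidr=10.224.0.0/16: the IP address range for the Pod network. Kubernetes supports many network plugins, and each plugin has its own requirements for --pod-network-cidr.

--apiserver-advertise-address: the address (interface) the API server uses to communicate with the other nodes in the cluster. If the master has several interfaces, set it explicitly; if it is omitted, kubeadm uses the interface that has the default gateway.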
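To see in advance which address would be advertised when --apiserver-advertise-address is omitted, the sketch below approximates the rule "use the interface that carries the default route". It is not kubeadm's own code: `parse_default_dev` and `parse_first_ipv4` are hypothetical helpers, and they assume the output format of iproute2's `ip -4 route show default` and `ip -4 -o addr show dev IFACE`.

```shell
#!/usr/bin/env bash
# Hypothetical helpers approximating the address kubeadm advertises when
# --apiserver-advertise-address is omitted: the IPv4 address of the interface
# that carries the default route. Not kubeadm's actual implementation.

# stdin: output of `ip -4 route show default`; prints the device of the first default route.
parse_default_dev() {
  awk '{ for (i = 1; i < NF; i++) if ($i == "dev") { print $(i + 1); exit } }'
}

# stdin: output of `ip -4 -o addr show dev IFACE`; prints the first IPv4 address without its prefix length.
parse_first_ipv4() {
  awk '$3 == "inet" { split($4, a, "/"); print a[1]; exit }'
}

# Usage on a live host (iproute2 installed):
#   dev=$(ip -4 route show default | parse_default_dev)
#   ip -4 -o addr show dev "$dev" | parse_first_ipv4
```

If the printed address is not the one the other nodes should use, pass --apiserver-advertise-address explicitly.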

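Initialization steps:

- kubeadm runs pre-flight checks.

- It pulls the container images for the components. This can take a while, depending mainly on network quality.

- It generates the bootstrap token and the certificates.

- It generates the kubeconfig files; the kubelet uses them to communicate with the master.

- It installs the master (control-plane) components.

- It installs the add-ons kube-proxy and CoreDNS.

- The Kubernetes master is initialized successfully.

- It prints how to configure kubectl.

- It prints how to install a Pod network.

- It prints how to join other nodes to the cluster.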
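The control-plane components are installed as static Pods: kubeadm writes one manifest per component into /etc/kubernetes/manifests (the directory named in the [wait-control-plane] line of the output above) and the kubelet starts them. A minimal check, assuming kubeadm's default manifest file names; `check_static_pods` is a hypothetical helper that takes the directory as an argument.

```shell
#!/usr/bin/env bash
# Hypothetical helper: verify that the four control-plane static Pod manifests exist.
# Usage: check_static_pods [/etc/kubernetes/manifests]
# Prints each missing file and returns 1 if any is missing.
check_static_pods() {
  local dir=${1:-/etc/kubernetes/manifests}
  local missing=0 f
  for f in etcd kube-apiserver kube-controller-manager kube-scheduler; do
    if [ ! -f "$dir/$f.yaml" ]; then
      echo "missing: $dir/$f.yaml"
      missing=1
    fi
  done
  return "$missing"
}
```

Run it on master30 after kubeadm init; an empty output and exit status 0 mean all four manifests are in place.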

Configure the cluster

Configure kubectl credentials

- By default, kubectl uses the credentials in ~/.kube/config to manage Kubernetes.

root@master30:~# mkdir -p $HOME/.kube

root@master30:~# cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

root@master30:~# chown $(id -u):$(id -g) $HOME/.kube/config

- If the KUBECONFIG environment variable is set, kubectl uses the file it points to instead of ~/.kube/config.

root@master30:~# mv .kube/config .

root@master30:~# export KUBECONFIG=/root/config

root@master30:~# kubectl get nodes

NAME                   STATUS     ROLES                  AGE    VERSION
master30.laoma.cloud   NotReady   control-plane,master   5m2s   v1.30.2

# Once the Pod network is configured, STATUS changes from NotReady to Ready

- You can also specify the credentials file explicitly with the --kubeconfig option, which takes precedence over KUBECONFIG.

root@master30:~# kubectl get nodes --kubeconfig /root/config

Kubernetes does not require any particular name for the credentials file.

root@master30:~# mv config kube.conf

root@master30:~# kubectl get nodes --kubeconfig kube.conf
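The lookup order described above (the --kubeconfig option, then KUBECONFIG, then ~/.kube/config) can be sketched as a small function. `which_kubeconfig` is a hypothetical helper for illustration only; it simplifies one detail, since kubectl merges every file in a colon-separated KUBECONFIG, whereas this sketch only lists them.

```shell
#!/usr/bin/env bash
# Hypothetical helper mirroring kubectl's kubeconfig lookup order:
#   1. the value passed to --kubeconfig
#   2. the KUBECONFIG environment variable
#   3. $HOME/.kube/config
# Simplification: kubectl merges all files in a colon-separated KUBECONFIG;
# this sketch only lists them, one per line.
which_kubeconfig() {
  local flag=${1:-}   # --kubeconfig value, empty if the option is not given
  if [ -n "$flag" ]; then
    printf '%s\n' "$flag"
  elif [ -n "${KUBECONFIG:-}" ]; then
    printf '%s\n' "$KUBECONFIG" | tr ':' '\n'
  else
    printf '%s\n' "$HOME/.kube/config"
  fi
}

# Usage: which_kubeconfig            -> file used by plain `kubectl get nodes`
#        which_kubeconfig kube.conf  -> file used with --kubeconfig kube.conf
```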

Configure the network

Calico is used as the Pod network here.

Official site: http://projectcalico.org or https://www.tigera.io/project-calico/

Documentation: https://projectcalico.docs.tigera.io/about/about-calico

Download calico

root@master30:~# wget --no-check-certificate https://raw.githubusercontent.com/projectcalico/calico/v3.30.7/manifests/calico.yaml

Set the Pod network

# Check the cluster's Pod network range

root@master30:~# kubectl get cm -n kube-system kubeadm-config -o yaml|grep podSubnet

podSubnet: 10.224.0.0/16

# Edit calico.yaml so that CALICO_IPV4POOL_CIDR matches the Pod network set during cluster initialization.

root@master30:~# vim calico.yaml

#############################################

            - name: CALICO_IPV4POOL_CIDR
              value: "10.224.0.0/16"

#############################################

            # Disable file logging so `kubectl logs` works.
            - name: CALICO_DISABLE_FILE_LOGGING
              value: "true"

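The two lines between the # markers were originally commented out. Uncomment them and keep them aligned with the neighbouring env entries: "- name" at the same indentation as the other entries, and "value" directly under "name".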
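The manual edit can also be scripted. This minimal sketch assumes the stock manifest contains the commented pair `# - name: CALICO_IPV4POOL_CIDR` / `#   value: "..."`. `pod_subnet` and `set_calico_cidr` are hypothetical helpers: the first reads podSubnet from the kubeadm-config ConfigMap, and the second uncomments the pair and sets the value. If the manifest's layout differs, sed leaves the file unchanged, so check the result before applying it.

```shell
#!/usr/bin/env bash
# Hypothetical helpers for aligning calico.yaml with the cluster's Pod CIDR.

# stdin: `kubectl get cm -n kube-system kubeadm-config -o yaml`; prints podSubnet.
pod_subnet() {
  awk '$1 == "podSubnet:" { print $2; exit }'
}

# stdin: calico.yaml; stdout: calico.yaml with the CALICO_IPV4POOL_CIDR entry
# uncommented and its value set to $1. Removing "# " keeps "- name" in the same
# column, and the value line ends up two spaces deeper so "value" sits under "name".
set_calico_cidr() {
  local cidr=$1
  sed -E '/# - name: CALICO_IPV4POOL_CIDR/{
s/# - name/- name/
n
s|#   value: ".*"|  value: "'"$cidr"'"|
}'
}

# Usage:
#   cidr=$(kubectl get cm -n kube-system kubeadm-config -o yaml | pod_subnet)
#   set_calico_cidr "$cidr" < calico.yaml > calico-edited.yaml
```

Writing to a new file instead of editing in place keeps the downloaded manifest available for comparison.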

Deploy the calico network

root@master30:~# kubectl apply -f calico.yaml

Verify Pod status

root@master30:~# kubectl get pods --all-namespaces

NAMESPACE     NAME                                           READY   STATUS    RESTARTS   AGE
kube-system   calico-kube-controllers-56fcbf9d6b-v6qsn       1/1     Running   0          28m
kube-system   calico-node-vc9v6                              1/1     Running   0          28m
kube-system   coredns-6d8c4cb4d-9qdxg                        1/1     Running   0          43m
kube-system   coredns-6d8c4cb4d-wwfmx                        1/1     Running   0          43m
kube-system   etcd-master30.laoma.cloud                      1/1     Running   0          43m
kube-system   kube-apiserver-master30.laoma.cloud            1/1     Running   0          43m
kube-system   kube-controller-manager-master30.laoma.cloud   1/1     Running   0          43m
kube-system   kube-proxy-8b7tn                               1/1     Running   0          43m
kube-system   kube-scheduler-master30.laoma.cloud            1/1     Running   0          43m
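Scanning this list by eye becomes harder as the cluster grows. The sketch below flags any Pod whose READY count is incomplete or whose STATUS is not Running (Completed Pods are skipped). `pods_not_ready` is a hypothetical helper that assumes the default `kubectl get pods -A` column order. To block until Pods are ready instead, kubectl provides `kubectl wait --for=condition=Ready pod --all -n kube-system --timeout=300s`.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read `kubectl get pods -A` output on stdin and print
# every Pod that is not fully ready (READY x/y with x != y) or whose STATUS
# is not Running. Completed Pods are ignored. Exit status 1 if any is found.
# Assumed columns: NAMESPACE NAME READY STATUS RESTARTS AGE
pods_not_ready() {
  awk 'NR > 1 {
         if ($4 == "Completed") next
         split($3, r, "/")
         if ($4 != "Running" || r[1] != r[2]) { print $1 "/" $2 " " $3 " " $4; bad = 1 }
       }
       END { exit bad }'
}

# Usage: kubectl get pods -A | pods_not_ready && echo "all Pods ready"
```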

Join nodes to the cluster

# Join node worker31 to the cluster

root@worker31:~# kubeadm join 10.1.8.30:6443 --token mi0yt8.1tzza4q64dr8y3pc \
        --discovery-token-ca-cert-hash sha256:5606e09618330aee8859abe3ea4cd8734f9b540630048a6e1c3aaf6c54d486fd

[preflight] Running pre-flight checks

[WARNING SystemVerification]: missing optional cgroups: blkio

[preflight] Reading configuration from the cluster...

[preflight] FYI: You can look at this config file with 'kubectl -n kube-system get cm kubeadm-config -o yaml'

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Starting the kubelet

[kubelet-start] Waiting for the kubelet to perform the TLS Bootstrap...

This node has joined the cluster:

* Certificate signing request was sent to apiserver and a response was received.

* The Kubelet was informed of the new secure connection details.

Run 'kubectl get nodes' on the control-plane to see this node join the cluster.

# Join node worker32 to the cluster

root@worker32:~# kubeadm join 10.1.8.30:6443 --token mi0yt8.1tzza4q64dr8y3pc \
        --discovery-token-ca-cert-hash sha256:5606e09618330aee8859abe3ea4cd8734f9b540630048a6e1c3aaf6c54d486fd

If you did not save the join command printed at the end of initialization, generate a new one (with a new token) as follows:

root@master30:~# kubeadm token create --print-join-command

kubeadm join 10.1.8.30:6443 --token dzpuca.8lqxqqydwskroabx --discovery-token-ca-cert-hash sha256:5606e09618330aee8859abe3ea4cd8734f9b540630048a6e1c3aaf6c54d486fd
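Bootstrap tokens created by kubeadm expire after 24 hours by default, which is the usual reason to generate a fresh join command. Before pasting a saved or copied command on a worker, you can sanity-check its two secrets. `check_join_cmd` is a hypothetical helper. It checks only the formats (token `[a-z0-9]{6}.[a-z0-9]{16}`, hash `sha256:` plus 64 hex digits), not whether the token is still valid on the cluster.

```shell
#!/usr/bin/env bash
# Hypothetical helper: sanity-check a `kubeadm join` command read from stdin.
# - bootstrap token: six [a-z0-9] characters, a dot, sixteen [a-z0-9] characters
# - CA hash: "sha256:" followed by 64 lowercase hex digits
# Prints token=... and hash=... on success; returns 1 on a malformed value.
check_join_cmd() {
  local cmd token hash
  # Turn backslash continuations and newlines into spaces so a two-line command works too.
  cmd=$(tr '\\\n' '  ')
  token=$(printf '%s\n' "$cmd" | grep -oE -- '--token +[^ ]+' | awk '{print $2}')
  hash=$(printf '%s\n' "$cmd" | grep -oE -- '--discovery-token-ca-cert-hash +[^ ]+' | awk '{print $2}')
  if ! printf '%s\n' "$token" | grep -qE '^[a-z0-9]{6}\.[a-z0-9]{16}$'; then
    echo "bad token: $token"; return 1
  fi
  if ! printf '%s\n' "$hash" | grep -qE '^sha256:[0-9a-f]{64}$'; then
    echo "bad hash: $hash"; return 1
  fi
  echo "token=$token"
  echo "hash=$hash"
}

# Usage: kubeadm token create --print-join-command | check_join_cmd
```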

Verify the deployment

# View cluster information

root@master30:~# kubectl cluster-info

Kubernetes control plane is running at https://10.1.8.30:6443

CoreDNS is running at https://10.1.8.30:6443/api/v1/namespaces/kube-system/services/kube-dns:dns/proxy

To further debug and diagnose cluster problems, use 'kubectl cluster-info dump'.

# View versions

root@master30:~# kubectl version

Client Version: v1.30.2

Kustomize Version: v5.0.4-0.20230601165947-6ce0bf390ce3

Server Version: v1.30.2
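Client and server both report v1.30.2 here. The Kubernetes version-skew policy supports kubectl within one minor version (older or newer) of kube-apiserver, so a check on the minor versions is useful when kubectl is installed separately. `check_kubectl_skew` is a hypothetical helper that assumes the `Client Version:` / `Server Version:` lines shown above and ignores the major version.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read `kubectl version` output on stdin and check that the
# client and server minor versions differ by at most one.
# Exit status: 0 within skew, 1 outside skew, 2 if a version line is missing.
check_kubectl_skew() {
  awk -F': *' '
    $1 == "Client Version" { split($2, c, "."); cm = c[2] }
    $1 == "Server Version" { split($2, s, "."); sm = s[2] }
    END {
      if (cm == "" || sm == "") { print "version line missing"; exit 2 }
      d = cm - sm; if (d < 0) d = -d
      printf "client minor %s, server minor %s, skew %d\n", cm, sm, d
      exit (d > 1)
    }'
}

# Usage: kubectl version | check_kubectl_skew
```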

# View node status

root@master30:~# kubectl get nodes

NAME                   STATUS   ROLES           AGE   VERSION
master30.laoma.cloud   Ready    control-plane   9h    v1.30.2
worker31.laoma.cloud   Ready    <none>          8h    v1.30.2
worker32.laoma.cloud   Ready    <none>          8h    v1.30.2
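With more workers it is convenient to let a script report which nodes are not Ready. `nodes_not_ready` is a hypothetical helper that assumes the default `kubectl get nodes` columns. A cordoned node shows `Ready,SchedulingDisabled` and is reported as well. The built-in alternative that waits instead of reporting is `kubectl wait --for=condition=Ready nodes --all --timeout=300s`.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read `kubectl get nodes` output on stdin, print each node
# whose STATUS is not exactly "Ready", then a summary line.
# Exit status 1 if any node is not Ready.
nodes_not_ready() {
  awk 'NR > 1 { total++; if ($2 == "Ready") ready++; else print $1 " " $2 }
       END { printf "%d/%d nodes Ready\n", ready, total; exit (ready != total) }'
}

# Usage: kubectl get nodes | nodes_not_ready
```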

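A node's STATUS becomes Ready only when all of the following hold:

- The Pod network is configured

- The kubelet service is running on the node

- Swap is off

- SELinux is disabled (on distributions that enable it, such as the RHEL family; Ubuntu uses AppArmor by default)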
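Of these conditions, swap is the easiest to check from a script, because /proc/swaps has one header line plus one line per active swap area. `swap_is_off` is a hypothetical helper. It takes the file path as an optional argument (default /proc/swaps), so the same logic can be tried on a sample file.

```shell
#!/usr/bin/env bash
# Hypothetical helper: succeed when swap is off. Reads a /proc/swaps-format file:
# a header line followed by one line per active swap area, so more than one
# line means swap is on. The path defaults to /proc/swaps.
swap_is_off() {
  awk 'END { exit (NR > 1) }' "${1:-/proc/swaps}"
}

# Usage:
#   swap_is_off && echo "swap off" || echo "swap on"
# To disable swap now: swapoff -a
# To keep it off after a reboot, also comment out the swap entry in /etc/fstab.
```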

# View Pod status

root@master30:~# kubectl get pods -A

NAMESPACE     NAME                                           READY   STATUS    RESTARTS   AGE
kube-system   calico-kube-controllers-7cb4fd5784-jx2xl       1/1     Running   0          11m
kube-system   calico-node-4b6s8                              1/1     Running   0          11m
kube-system   calico-node-bsr7v                              1/1     Running   0          11m
kube-system   calico-node-v8jdn                              1/1     Running   0          11m
kube-system   coredns-66f779496c-4j88h                       1/1     Running   0          13m
kube-system   coredns-66f779496c-fnb8m                       1/1     Running   0          13m
kube-system   etcd-master30.laoma.cloud                      1/1     Running   0          13m
kube-system   kube-apiserver-master30.laoma.cloud            1/1     Running   0          13m
kube-system   kube-controller-manager-master30.laoma.cloud   1/1     Running   0          13m
kube-system   kube-proxy-27vl2                               1/1     Running   0          11m
kube-system   kube-proxy-npv9h                               1/1     Running   0          11m
kube-system   kube-proxy-q2qrs                               1/1     Running   0          11m
kube-system   kube-scheduler-master30.laoma.cloud            1/1     Running   0          13m
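This listing shows three calico-node Pods and three kube-proxy Pods for three nodes. Both run as DaemonSets, one Pod per node, so their counts should equal the node count. A minimal sketch of that cross-check follows. `check_daemonset_pods` is a hypothetical helper that matches Pods by their name prefixes, which is an assumption about naming rather than a lookup of the DaemonSet owner.

```shell
#!/usr/bin/env bash
# Hypothetical helper: count calico-node-* and kube-proxy-* Pods in
# `kubectl get pods -A` output (stdin) and compare each count with the node
# count given as $1. Exit status 1 if either count differs.
check_daemonset_pods() {
  local nodes=$1
  awk -v n="$nodes" '
    NR > 1 && $2 ~ /^calico-node-/ { calico++ }
    NR > 1 && $2 ~ /^kube-proxy-/  { proxy++ }
    END {
      printf "calico-node: %d, kube-proxy: %d, nodes: %d\n", calico, proxy, n
      exit (calico != n || proxy != n)
    }'
}

# Usage: kubectl get pods -A | check_daemonset_pods "$(kubectl get nodes --no-headers | wc -l)"
```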